European Geosciences Union (EGU) General Assembly 2024

Vienna, Austria

Come see live demos of Real-Time Detection of Adulteration in Spices at the American Spice Trade Association (ASTA) Annual Meeting, 16-18 April in Tucson. On the same days, you can instead see our Remote-Sensing turnkey and payload packages at the EARSeL Workshop on Imaging Spectroscopy in Valencia, Spain.

7000 N Resort Dr, Tucson, AZ 85750

Plaza Virgen de la Paz, 3, Ciutat Vella, 46001, Valencia, Spain

Learn about an ongoing mission flying lightweight UAVs with hyperspectral imaging systems over hops fields in Bavaria. Two webinars were hosted on March 12th, 2024, and we created a single recording that combines the best presentations and both sets of Q&A.

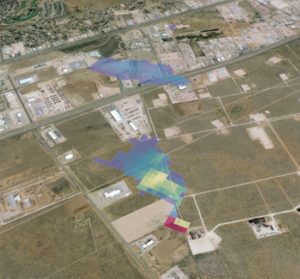

Headwall hyperspectral imaging sensors for MethaneAIR serve as a precursor to MethaneSAT, which captures data from space on methane emissions, a critical factor in assessing and mitigating climate change.

We want to know what you think about the Headwall Nano HP VNIR hyperspectral imaging system and the Headwall Co-Aligned HP VNIR-SWIR imaging system! Please take a short survey (<2 min) that will help you contribute to product development. Thank you!

John Margeson, Headwall Product Manager Remote Sensing, shows how easy it is to use the Headwall Nano HP VNIR™ hyperspectral imaging sensor with the DJI Matrice 350. In fact, it is the only hyperspectral imaging payload with integrated LiDAR that is ready to fly on the M350.

Using the MV.C NIR and the perClass Mira Stage and Software. Traditional textile sorting methods are prone to errors with fabrics that have similar densities and air resistances. Chemical sorting offers high accuracy but requires destroying the material, and it is unavailable for some fabrics (such as wool).

Thank you to everyone who stopped by the Headwall, perClass, Holographix, and inno-spec booths at SPIE Photonics West!
